Warning: The following post may contain weak metaphors and bad analogies. If these are offensive to you or illegal in your jurisdiction, you may want to skip this post.

Like many people who grew up in the forward-thinking, happy-go-lucky days of the twentieth century when anything seemed possible, I sometimes wonder what happened to all the futuristic luxuries that technology was supposed to have delivered by now. Science fiction promised us flying cars, ray guns, personal robots, and space colonies. I can understand reality not living up to speculative fiction -- after all, nobody has a crystal ball. However, many of us who were involved in software during the early to mid-nineties had some very specific and realistic notions of how computing technology would progress over the coming years, including the following:

- Ubiquitous computing. Computers would fade into the woodwork all around us, and human-computer interaction would take place using natural metaphors instead of clunky terminals. In some ways we're slowly moving in that direction, as powerful computers become embedded into mobile phones and other handheld devices, but we're not there yet.

- Transparent cryptography. Cryptography would be seamlessly integrated into our day-to-day communications. Today, you can use cryptography to communicate with your bank's web site or connect to your office's network in a fairly seamless fashion, but the vision was much broader than this -- all network messaging (email, instant messaging, Twitter, etc.) would be encrypted to protect the contents from prying eyes, and digitally signed to provide strong assurance of the authenticity of the sender.

- Natural language processing. By this time we should have been able to verbally interact with our ubiquitous computing infrastructure, asking our computers to "turn off the coffee pot that I accidentally left on," "check on the status of my portfolio," "open the pod bay doors," etc. At present, natural language technology still seems clunky and limited.

- Intelligent agents, micropayments, effective and low-cost telepresence, and many others.

Some of the above ideas exist today in a limited fashion, although none have lived up to the potential that we once envisioned. Some ideas require overcoming difficult challenges (such as the ideas related to artificial intelligence: natural language processing and intelligent agents), and others are technically solved to a large degree but have not been refined enough to be successful in consumer applications (transparent cryptography and micropayments, for example). I've been mulling over a theory about why these technologies have either failed to become reality, or haven't lived up to our expectations.

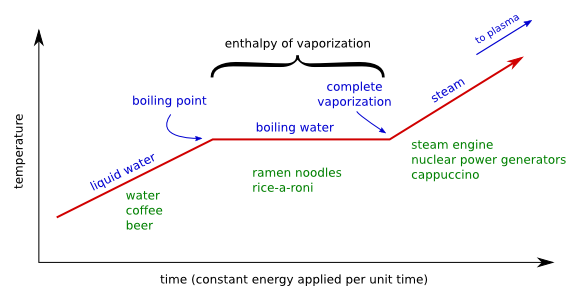

In the late twentieth century, technology went through a period of great advancement in capability which outpaced the application of these capabilities as solutions to problems. An entrepreneur who is investigating ideas for a startup company will find a great many tools available, and many unsolved problems. Using existing tools to solve existing problems can be very lucrative, and the entrepreneur will likely choose to deliver these solutions instead of developing new tools. Because there is a finite (although not fixed) amount of innovative energy in the economy, this low-hanging fruit is bound to be picked first. If it's 2004 and you're looking to start a company, which would be more likely to provide a larger and quicker return on investment -- solving the problem of how to richly communicate with friends using established and well-understood web tools (Facebook), or starting an artificial intelligence research and development laboratory that may not bear fruit for years? When the low-hanging fruit becomes scarce, more energy will be spent on the harder problems, and we will be back on track to building the world of tomorrow.

And now, it's time for the bad analogy you've been waiting for. When I think about the temporary diversion of efforts into applications instead of new technologies, I can't seem to shake from my head a graph from high school chemistry class showing enthalpy of vaporization. (Enthalpy can be very roughly defined as the heat content of a thermodynamic system.) When you add energy (heat) to water, its temperature will increase continuously in proportion to the amount of energy added -- until it reaches the boiling point (201°F at any reasonable elevation), at which time the energy is redirected towards the "enthalpy of vaporization" (also called the "heat of evaporation") and the temperature flatlines at the boiling point until vaporization is complete and the energy can once again be used towards increasing the temperature, as seen in this graph:

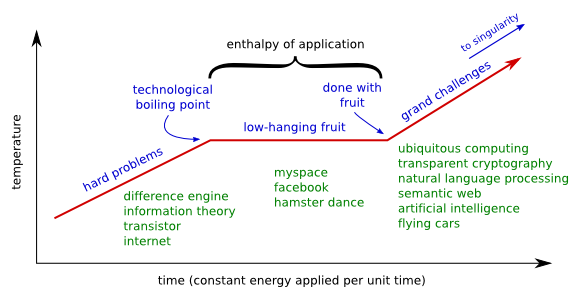
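For anyone who would rather poke at the numbers than squint at a graph, the heating curve can be roughly sketched in a few lines of Python. This is only a back-of-the-envelope model: the function name, the constants (approximate textbook values for water), the 20°C starting temperature, and the sea-level 100°C default boiling point are all my own assumptions for illustration, and the specific heats are treated as constant.

```python
# Rough heating-curve sketch. All constants are approximate textbook
# values (assumptions for illustration, not measurements).
SPECIFIC_HEAT_WATER = 4186.0   # J/(kg*K), liquid water
SPECIFIC_HEAT_STEAM = 2010.0   # J/(kg*K), steam, treated as constant
HEAT_OF_VAPORIZATION = 2.26e6  # J/kg, water at its boiling point


def temperature_after_heating(energy_j, mass_kg=1.0, start_c=20.0, boiling_c=100.0):
    """Return the temperature (in Celsius) after adding energy_j joules.

    Hypothetical helper: assumes liquid water starting below the boiling
    point, no heat loss, and a fixed boiling point (pass a lower
    boiling_c to model a higher elevation).
    """
    # Phase 1: energy raises the liquid's temperature proportionally.
    energy_to_boil = mass_kg * SPECIFIC_HEAT_WATER * (boiling_c - start_c)
    if energy_j <= energy_to_boil:
        return start_c + energy_j / (mass_kg * SPECIFIC_HEAT_WATER)

    # Phase 2: energy goes into vaporization; temperature flatlines.
    energy_to_vaporize = mass_kg * HEAT_OF_VAPORIZATION
    if energy_j <= energy_to_boil + energy_to_vaporize:
        return boiling_c

    # Phase 3: everything is steam, so the temperature rises again.
    remaining = energy_j - energy_to_boil - energy_to_vaporize
    return boiling_c + remaining / (mass_kg * SPECIFIC_HEAT_STEAM)


if __name__ == "__main__":
    # Step through increasing energy inputs to see the plateau.
    for kilojoules in range(0, 3001, 250):
        temp = temperature_after_heating(kilojoules * 1000.0)
        print(f"{kilojoules:5d} kJ -> {temp:7.1f} C")
```

The long middle stretch where the output stops changing is the plateau in the graph: the energy is still going in, but none of it shows up as temperature.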

In a similar fashion, it seems that the technology industry has been in a sort of "enthalpy of application" where energy (effort) has been redirected towards application, and the technology "temperature" has not risen much:

Before anybody accuses me of being hostile towards Web 2.0 companies, let it be clear that I think putting effort into using existing tools to solve problems is just fine and dandy, and this is the natural order of things. (Even if my graphs seem to imply that Facebook is the ramen noodles of technology.) However, it never hurts to try to make sense of these trends. If the current economic downturn begins drying up the low-hanging fruit prematurely, it may be a good idea to begin looking into the grand challenges and long-term endeavors. I'm personally looking forward to working on some of these challenges in the years ahead.

posted at 2008-10-27 00:33:55 MDT

by David Simmons

tags: web2.0 whatsnext
